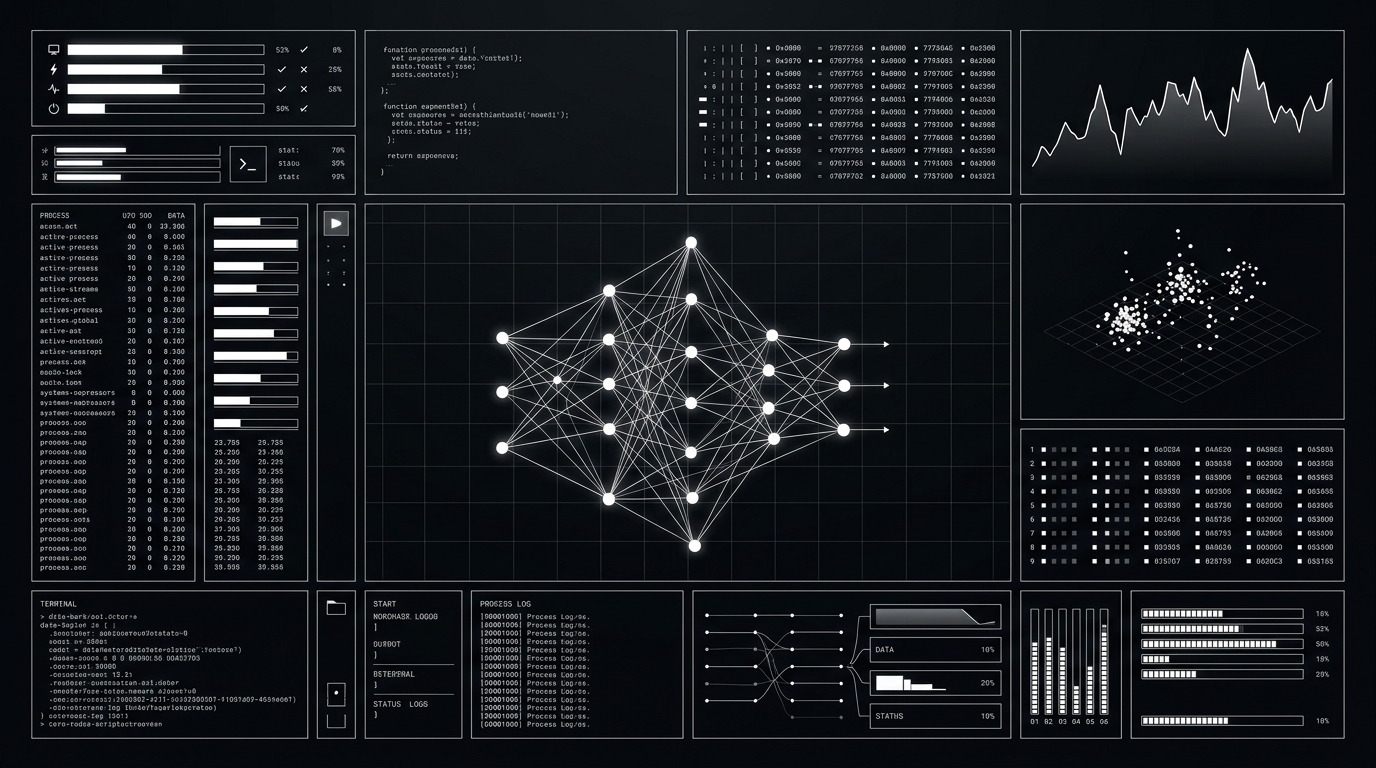

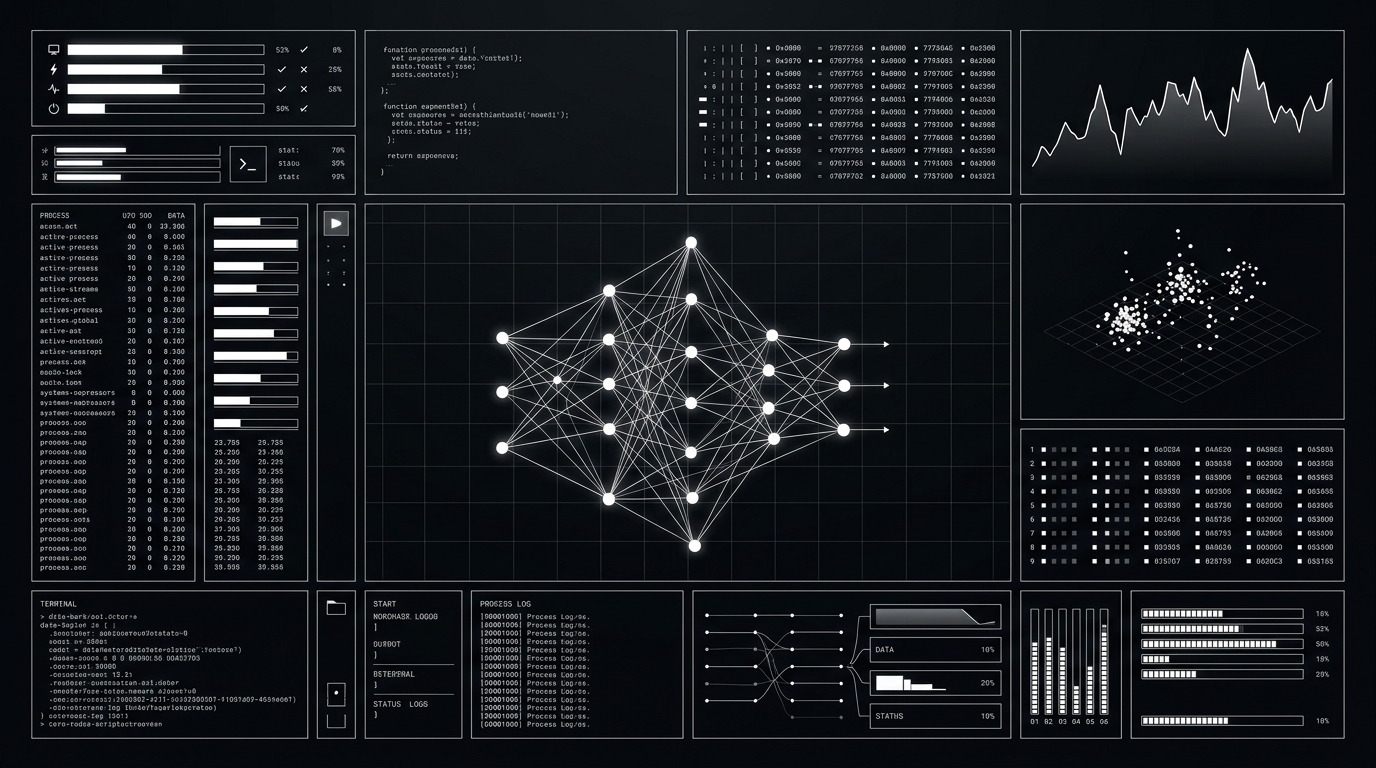

PRODUCT

NEXUS PLATFORM

INFERENCE METRICS

| Metric | Value |
| --- | --- |
| TOKENS / SEC | 1.2M |
| UPTIME | 99.7% |
| P50 LATENCY | 4.2 ms |
| PARAMETERS | 47B |

Frontier AI Research Laboratory

We are hiring researchers and engineers who want to build intelligence that matters. Explore open roles and research partnerships.